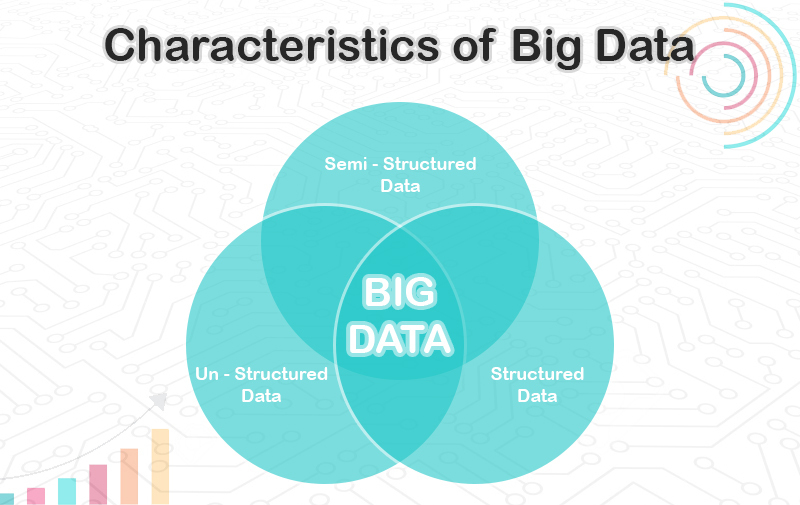

Big Data is a combination of structured, semi-structured, and unstructured data that organizations collect and store so they can analyze it and use the results for purposes such as prediction and business decision-making.

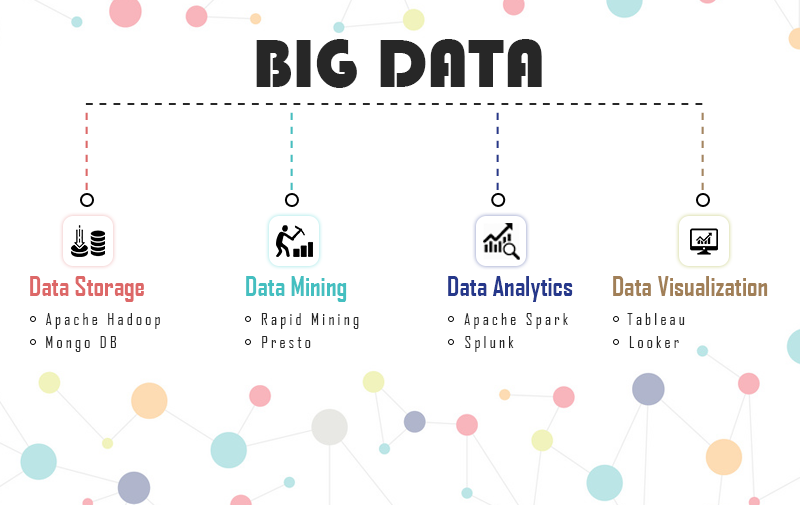

A Big Data workflow can be divided into four major parts, which are listed below:

Data Storage:

Data storage tools extract, store, and manage large volumes of data so that it can be processed later. Most storage platforms are designed to work alongside other programs. Two of the most important tools are:

Apache Hadoop: An open-source framework that is widely used for storing and processing data in a distributed computing environment. It spreads both the data and the processing work across a cluster of hardware, which lets it handle datasets that are too large for a single machine.
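To make the idea of distributed processing more concrete, here is a minimal sketch of the MapReduce model that Hadoop's processing layer is built around. It runs on one machine in plain Python and does not use any Hadoop API; the function names (`map_phase`, `shuffle`, `reduce_phase`) and the sample lines are made up for illustration.

```python
from collections import defaultdict

def map_phase(line):
    """Emit a (word, 1) pair for every word in one input line."""
    return [(word.lower(), 1) for word in line.split()]

def shuffle(pairs):
    """Group all emitted values by key, as the framework does between phases."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped

def reduce_phase(key, values):
    """Combine all values for one key into a single result."""
    return key, sum(values)

def word_count(lines):
    # On a real cluster the map calls would run in parallel on different nodes.
    pairs = [pair for line in lines for pair in map_phase(line)]
    grouped = shuffle(pairs)
    return dict(reduce_phase(k, v) for k, v in grouped.items())

# Hypothetical input lines, standing in for blocks of a much larger file.
lines = ["big data needs storage", "big data needs processing"]
counts = word_count(lines)
print(counts)
```

The point of the split is that each map call only needs its own line and each reduce call only needs the values for its own key, so the work can be spread over many machines.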

MongoDB: A NoSQL database built for large volumes of data. Its basic unit of data is the document, a set of field-value pairs similar to a JSON object. MongoDB itself is written mainly in C++, and its flexible document model makes data easy to store and manage.
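The document idea can be sketched with ordinary Python dictionaries. This is only an illustration of the data model, not the MongoDB driver: the `find` helper, the field names, and the sample records are all hypothetical.

```python
import json

# A hypothetical customer record as a document: field-value pairs,
# where a value can itself be a nested document or a list.
customer = {
    "_id": 1,
    "name": "Asha",
    "email": "asha@example.com",
    "address": {"city": "Pune", "country": "IN"},
    "orders": [
        {"sku": "A-100", "qty": 2},
        {"sku": "B-200", "qty": 1},
    ],
}

# Documents in the same collection do not have to share the same fields.
collection = [customer, {"_id": 2, "name": "Ben", "tags": ["trial"]}]

def find(collection, **criteria):
    """Return documents whose top-level fields match all criteria."""
    return [doc for doc in collection
            if all(doc.get(k) == v for k, v in criteria.items())]

matches = find(collection, name="Asha")
total_qty = sum(item["qty"] for item in matches[0]["orders"])
print(json.dumps(matches[0], indent=2))
print(total_qty)
```

Because each record carries its own field names, a new field can be added to one document without changing the others.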

Data Mining:

Data mining extracts patterns and trends from raw data. Two major tools are used for this:

RapidMiner: This tool helps users build predictive models. Its main strengths are the preparation and processing of data and the building of machine learning and deep learning models. It is an end-to-end platform, so organizations can do both in one place.

Presto: A distributed SQL query engine that runs analytic queries against large datasets. It can combine data from several sources in a single query, so analytics can be run across all of them at once.
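The idea of one query spanning several data sources can be sketched with Python's built-in `sqlite3` module as a stand-in. This is not Presto and does not use its API: two separate in-memory SQLite databases play the role of two sources, and the table names, column names, and values are invented for the example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# Attach a second, separate in-memory database to stand in for another source.
conn.execute("ATTACH DATABASE ':memory:' AS crm")

conn.execute("CREATE TABLE main.orders (customer_id INTEGER, amount REAL)")
conn.execute("CREATE TABLE crm.customers (id INTEGER, region TEXT)")
conn.executemany("INSERT INTO main.orders VALUES (?, ?)",
                 [(1, 120.0), (1, 80.0), (2, 50.0)])
conn.executemany("INSERT INTO crm.customers VALUES (?, ?)",
                 [(1, "EU"), (2, "US")])

# One analytic query that joins tables living in two different databases.
query = """
    SELECT c.region, SUM(o.amount) AS revenue
    FROM main.orders AS o
    JOIN crm.customers AS c ON c.id = o.customer_id
    GROUP BY c.region
    ORDER BY c.region
"""
rows = conn.execute(query).fetchall()
print(rows)
conn.close()
```

A real query engine does the same kind of join, but across remote systems and with the work split over many workers.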

Data Analytics

Data analytics tools clean up the data and transform it into information that can be used for business decision-making. Two main tools are used for data analysis:
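As a rough illustration of cleaning data and turning it into usable information, the sketch below normalizes a few messy records and then totals them per customer. The records, field names, and the `clean` and `total_by_customer` helpers are hypothetical, and dropping bad rows is just one possible cleaning policy.

```python
raw_records = [
    {"customer": " Asha ", "amount": "120.50"},
    {"customer": "ben", "amount": "n/a"},      # unusable amount
    {"customer": "Asha", "amount": "79.50"},
    {"customer": "", "amount": "10"},          # missing customer name
]

def clean(records):
    """Normalize names, parse amounts, and drop rows that cannot be repaired."""
    cleaned = []
    for rec in records:
        name = rec["customer"].strip().title()
        try:
            amount = float(rec["amount"])
        except ValueError:
            continue  # skip rows whose amount is not a number
        if not name:
            continue  # skip rows without a customer
        cleaned.append({"customer": name, "amount": amount})
    return cleaned

def total_by_customer(records):
    """Transform cleaned rows into a per-customer total."""
    totals = {}
    for rec in records:
        totals[rec["customer"]] = totals.get(rec["customer"], 0.0) + rec["amount"]
    return totals

cleaned = clean(raw_records)
totals = total_by_customer(cleaned)
print(totals)
```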

Apache Spark: This tool is generally faster and more efficient than Hadoop MapReduce for data analysis. It is fast because it keeps intermediate data in RAM instead of writing it to disk between MapReduce batch steps. It supports a wide variety of data analytics tasks and queries.

Splunk: It is helpful for generating graphs, charts, and dashboards, and it can also incorporate AI (Artificial Intelligence) where required.

Data Visualization

This is the final stage, where the data is presented in a form that any user can understand easily. Two tools are mainly used for this:

Tableau: A popular and effective tool that provides an easy drag-and-drop interface. Users can generate pie charts, bar charts, box plots, Gantt charts, and many more, and can explore the visualizations in real time.

Looker: A BI (Business Intelligence) tool that helps make sense of big data analytics and share the resulting insights with other teams.

How Does Big Data Work?

Big Data is usually classified as structured, semi-structured, or unstructured. Structured data is easy to manage in databases and spreadsheets, is often numeric, and is easy to understand.

On the other hand, unstructured data is unorganized and has no format or predetermined way of being interpreted.
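The difference between the three kinds of data shows up as soon as you try to read them in code. The sketch below uses only the Python standard library; the sample values are invented, and the regular expression is just one simple way to pull a number out of free text.

```python
import csv
import io
import json
import re

# Structured: fixed columns, every row has the same fields.
structured = "id,amount\n1,120.5\n2,50.0\n"
rows = list(csv.DictReader(io.StringIO(structured)))

# Semi-structured: self-describing fields, but the shape can vary per record.
semi_structured = '{"id": 3, "amount": 75.0, "tags": ["promo"]}'
record = json.loads(semi_structured)

# Unstructured: free text, so any structure has to be inferred.
unstructured = "Customer 4 called to say the 19.99 charge was wrong."
amounts = [float(m) for m in re.findall(r"\d+\.\d+", unstructured)]

print(rows[0]["amount"], record["tags"], amounts)
```

The structured and semi-structured samples can be read with a standard parser, while the free text needs a rule that someone has to design and maintain.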

To give any data sense and meaning, it has to pass through the four major stages of storage, mining, analysis, and visualization, which store the data and then present it to users.
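The four stages can be pictured as a small pipeline in which each step feeds the next. The sketch below is a toy version in plain Python: the stage functions, the event data, and the text bar chart are all illustrative assumptions, and real systems would use dedicated tools such as the ones described above for each stage.

```python
from collections import Counter

def store(events):
    """Storage stage: keep the raw events (here, just an in-memory list)."""
    return list(events)

def mine(stored):
    """Mining stage: pull out a simple pattern, the count per category."""
    return Counter(event["category"] for event in stored)

def analyze(counts):
    """Analysis stage: turn counts into shares of the total."""
    total = sum(counts.values())
    return {category: n / total for category, n in counts.items()}

def visualize(shares, width=20):
    """Visualization stage: render a text bar chart, largest share first."""
    lines = []
    for category, share in sorted(shares.items(), key=lambda kv: -kv[1]):
        bar = "#" * round(share * width)
        lines.append(f"{category:<8} {bar} {share:.0%}")
    return "\n".join(lines)

# Hypothetical purchase events.
events = [
    {"category": "books"}, {"category": "games"},
    {"category": "books"}, {"category": "books"},
]
shares = analyze(mine(store(events)))
chart = visualize(shares)
print(chart)
```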

Advantages of Big Data:

Big data is useful in many fields, such as medicine, IT (Information Technology), industry, sports, and many more. It provides the following benefits:

- A better decision-making process.

- Improved customer service.

- Easier fraud detection.

- Reduces the cost of business processes through simpler methods.

- Helps increase the productivity of the business.

Disadvantages of Big Data

Everything in this world has pros and cons, and so does Big Data. Below are a few of its disadvantages:

- Lack of technical knowledge or talent in the field.

- Security risks involved with the data.

- Compliance.